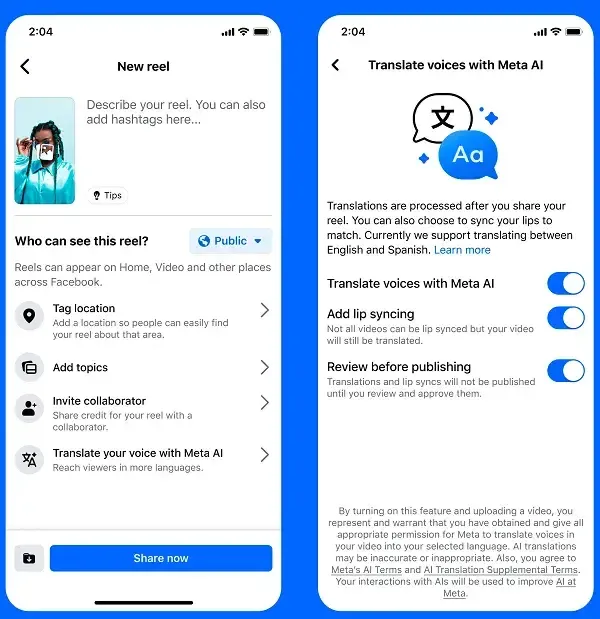

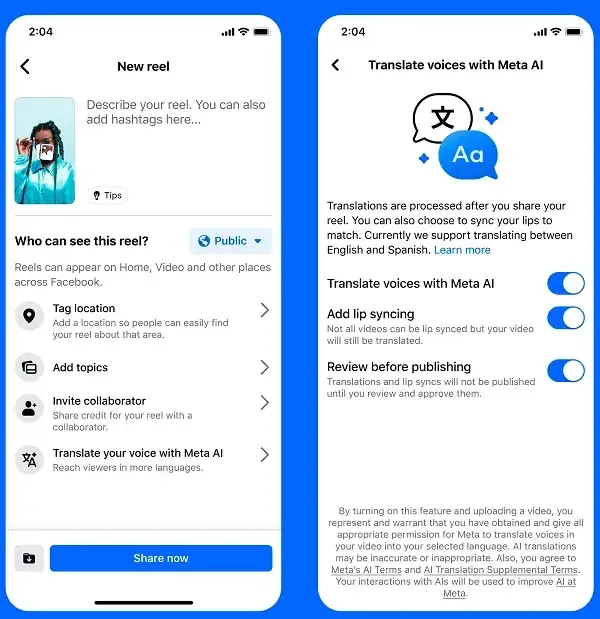

Meta is expanding content accessibility in its apps by introducing AI-powered voice translation. This technology not only translates audio but also synchronizes lip movements with the translated speech, creating a more natural viewing experience.

Meta AI Voice Translation

According to Meta, the system reproduces the tone and sound of your own voice and combines it with AI lip-syncing, so that you appear to be speaking the translated language yourself.

For example, Facebook users can now watch a video recorded in English with Spanish audio, or the reverse, while the speaker’s lip movements stay in sync with the translated audio.

How It Works

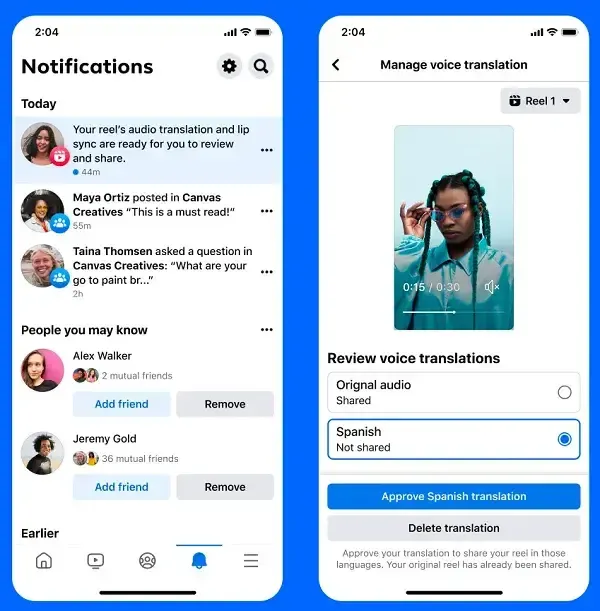

- Creators can enable or disable the “Translate your voice with Meta AI” option in the Reels composer.

- Creators can choose to add lip-sync to the translation or to translate the audio only, leaving the visuals unmodified.

- Before publishing, creators can preview the translation and accept or reject it.

Once the Reel is published, viewers are notified that it has been translated with Meta AI and can select their preferred language.

Supported Languages

- Initially, Meta supports English ↔ Spanish translations.

- Meta says more languages will be added later to broaden accessibility.

Best Practices for Accuracy

To improve translation quality, Meta recommends:

- Limiting videos to a maximum of two speakers.

- Avoiding overlapping voices or heavy background music.

- Keeping in mind that building an audience in a new language takes time, much like starting from scratch.

Extra Feature: Manual Dubbing

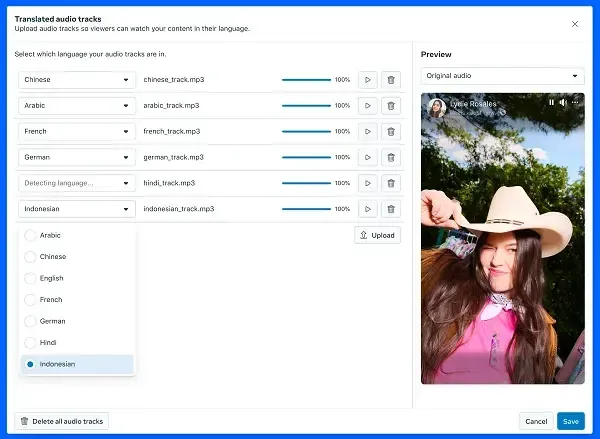

In addition to AI translation, Meta now lets Page managers add up to 20 manually dubbed audio tracks to a single Reel.

- Viewers will hear the Reel in their preferred language.

- Unlike AI translations, manually dubbed tracks are not lip-synced to the speaker.

Conclusion

While not perfect, this tool opens up opportunities for creators to reach a much wider global audience. With AI voice translation and lip-sync features, Meta is breaking down language barriers and delivering a more authentic, multilingual experience.